At Propylon, we work with clients across the legal and regulatory sector – from US state-level government to large multinationals. One of the interesting things I’ve found is how similar the challenges faced by these organizations are to those encountered by programmers and engineering teams.

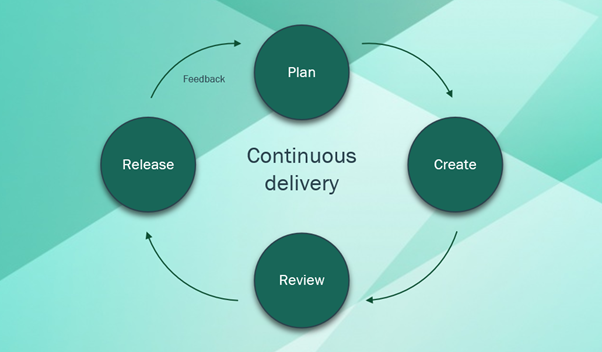

In engineering teams, we invest heavily in tooling to enable us to effectively manage and review large codebases, with teams often distributed around the world. We implement continuous delivery and continuous integration patterns and pipelines to ensure we can quickly implement, build, and release software updates in the most efficient way possible while adhering to our own quality standards.

The 2019 State of DevOps survey found that organizations that implemented continuous delivery methods in their software lifecycle were twice as likely to meet or exceed their performance goals. It can’t be overstated how much of an impact the implementation of continuous delivery methods and tools can have on an organization.

Similar needs, similar challenges

In the legal and regulatory sector, content management teams have the same need as engineering teams – to manage and release (publish) large content sets in an efficient way, ensuring quality and accuracy are maintained.

These content sets are often large policy manuals, each of which implements a set of regulatory guidance or requirements. In the past, these content sets would have been distributed as physical books. But more recently, they have been digitized and distributed as PDFs or other digital formats.

Often these content management teams encounter publishing challenges because their tools are built to support a legacy model, one which is optimized for book publishing. Engineering teams experienced similar challenges in the past when distributing software via physical media.

In content publishing, which for many organizations has evolved from paper-based publishing, we see a large up-front effort in developing the content followed by a large publish event. Iteration is slow and expensive, and issues are hard to fix after the fact. Because of this, significant effort is invested in ensuring content is heavily reviewed, with everything double- and triple-checked for errors before finally hitting publish.

Compare this to software delivery. In the software world, especially in the context of the web, the processes and tools are designed to allow for ongoing changes and updates. The up-front effort is low, and there are many publish events.

Iteration is constant and accounted for in the core processes. While there are still quality measures in place such as code review and testing, any issues identified after release can often be fixed in a matter of minutes.

In my experience working with these teams, many of the challenges they encounter can be solved by implementing the right tools and processes to enable a model of continuous delivery. That is, removing the large, infrequent publishing events, and instead adopting a more frequent, rolling release pattern.

Key considerations

Build in flexibility

Provide the right information at the right time

The tools used to manage, review, and publish content should surface the relevant information at the right time to enable users to get on with the job at hand.

Every minute spent looking for information on what happened to a piece of content since the last review, or trying to find other cited or referenced content, is time lost for the user, who should be focusing on what is required of them at that moment, be it a review, writing a report, publishing, etc.

Consistency and reliability

Auditability

- Who made this change?

- When was it made?

- What was the context?

- Who had reviewed it up until that point?

- Were there any comments added during the review stage?

It should be crystal clear how content has ended up in its current state.

The audit trail adds a safety net – it demonstrates exactly how your content has come to be in its current form, who has had eyes on it, and who has approved it. It takes the assumptions out of the process and enables fact-based decision-making.

It also enables rollback of changes. If a change ends up being problematic or incomplete, it is very clear what exactly needs to be reverted: inspect the audit trail and revert the relevant entries. Of course, reverting changes should also be recorded!
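To make this concrete, here is a minimal sketch of an append-only audit trail in Python. It is an illustration only, not a description of any particular product: the `AuditEntry` and `AuditTrail` names, their fields, and the sample policy text are all hypothetical, and the sketch assumes a single piece of content whose most recent change is the one being reverted.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AuditEntry:
    """One immutable record in the trail (all field names are illustrative)."""
    entry_id: int
    author: str            # who made this change?
    timestamp: datetime    # when was it made?
    context: str           # what was the context?
    old_text: str
    new_text: str
    reviewers: tuple = ()  # who had reviewed it up until that point?
    comments: tuple = ()   # comments added during the review stage
    reverts: Optional[int] = None  # entry_id undone by this entry, if any


@dataclass
class AuditTrail:
    """Append-only log: entries are added, never edited or deleted."""
    entries: List[AuditEntry] = field(default_factory=list)

    def record(self, author, context, old_text, new_text,
               reviewers=(), comments=(), reverts=None) -> AuditEntry:
        entry = AuditEntry(
            entry_id=len(self.entries) + 1,
            author=author,
            timestamp=datetime.now(timezone.utc),
            context=context,
            old_text=old_text,
            new_text=new_text,
            reviewers=tuple(reviewers),
            comments=tuple(comments),
            reverts=reverts,
        )
        self.entries.append(entry)
        return entry

    def revert(self, entry_id: int, author: str, reason: str) -> AuditEntry:
        """Undo an earlier entry by recording a new entry that swaps its texts."""
        target = self.entries[entry_id - 1]
        return self.record(author, f"Revert of #{entry_id}: {reason}",
                           old_text=target.new_text, new_text=target.old_text,
                           reverts=entry_id)

    def current_text(self) -> str:
        return self.entries[-1].new_text if self.entries else ""


trail = AuditTrail()
trail.record("alice", "Initial draft of section 4.2",
             "", "Claims must be filed within 30 days.")
change = trail.record("bob", "Update filing deadline",
                      "Claims must be filed within 30 days.",
                      "Claims must be filed within 60 days.",
                      reviewers=("carol",))
trail.revert(change.entry_id, "carol", "deadline change not yet approved")
```

The design choice worth noting is that `revert` never removes anything. It appends a new entry that points back at the one it undoes, so the rollback itself shows up in the trail alongside every other change.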

One other benefit of a detailed audit trail is that it enables retrospective analysis of the entire process. It shows how long each stage of a workflow took, how many changes arose from the review stages, the length of time between final review and final approval, and so on. These insights enable teams to adapt their processes to remove roadblocks and introduce efficiencies which they otherwise may not have thought to implement.
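As a rough sketch of that kind of retrospective analysis, the Python snippet below derives the time spent in each workflow stage from a list of timestamped events. The stage names, dates, and the `stage_durations` helper are assumptions made up for illustration; a real audit trail would supply its own events.

```python
from datetime import datetime, timedelta

# Hypothetical workflow events: (stage name, time the stage was entered).
events = [
    ("drafting", datetime(2024, 3, 1, 9, 0)),
    ("review", datetime(2024, 3, 4, 9, 0)),
    ("final_approval", datetime(2024, 3, 4, 15, 0)),
    ("published", datetime(2024, 3, 5, 9, 0)),
]


def stage_durations(events):
    """Return how long each stage lasted, measured up to the start of the next stage."""
    ordered = sorted(events, key=lambda e: e[1])
    durations = {}
    # Pair each event with the one that follows it; the last stage has no end yet.
    for (stage, started), (_, next_started) in zip(ordered, ordered[1:]):
        durations[stage] = durations.get(stage, timedelta()) + (next_started - started)
    return durations


durations = stage_durations(events)
for stage, spent in durations.items():
    print(f"{stage}: {spent}")
```

If a stage is entered more than once, for example when content goes back to review, its durations are summed, which is often the number a team wants when looking for roadblocks.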

Toward a continuous delivery model

In summary, the tools used to manage and publish content play a huge part in how efficient the end-to-end process can be. Whether that content is source code or legal and regulatory content, adopting the right tools can enable teams to move towards a continuous delivery model.

They’ll see benefits like reduced turnaround time for changes, simplified workflows, and increased organizational performance. In short, a continuous delivery model allows organizations to focus less on processes and more on their business.